Rodney Brooks took the stage at the 2016 edition of South by Southwest for an hour-long interview. The co-founder of two world-class robotics companies, iRobot and Rethink Robotics, and an emeritus professor at MIT, he had every right to feel satisfied at this late stage in his career. Yet the disappointment in the interview was unmistakable. “Not much progress was made,” he says about 35:15 into the video, sounding like Peter Fonda saying “We blew it”. Back in the 1980s Brooks started a revolution in robotics with something called the subsumption architecture, a revolution that has seemingly petered out. This is the story of that revolution, of the eccentrics behind it, and of whether they really failed or created the future of robotics.

Author: Simon Birrell

About Simon Birrell

After making video games, VR, comics, films and the net, what's left? Robots.

SLAM and Autonomous Navigation with the Deep Learning Robot

Getting your robot to obey “Go to the kitchen” seems like it should be a simple problem to solve. In fact, it requires some advanced mathematics and a lot of programming. Having ROS built into the Deep Learning Robot means that this is all available via the ROS navigation stack, but that doesn’t make it a set-and-forget feature. In this post, we’ll walk through both SLAM and autonomous navigation (derived from the Turtlebot tutorials), show you how they work, give you an overview of troubleshooting and outline the theory behind it all.
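To give a flavour of the mathematics involved: once a map exists, the navigation stack's global planner searches a costmap built from it for a route to the goal, using a grid search in the Dijkstra/A* family. The sketch below is a minimal A* search over a hypothetical binary occupancy grid (1 = occupied, 0 = free) with 4-connected, unit-cost moves. It illustrates the idea only and is not the navigation stack's planner, which works on weighted costmaps and hands the route to a separate local planner to follow.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def astar(grid: List[List[int]], start: Cell, goal: Cell) -> Optional[List[Cell]]:
    """Return a shortest 4-connected path from start to goal, or None.

    grid[r][c] == 1 marks an occupied cell, 0 marks free space.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell: Cell) -> int:
        # Manhattan distance never overestimates for unit-cost 4-connected moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]          # entries are (f = g + h, g, cell)
    came_from: Dict[Cell, Optional[Cell]] = {start: None}
    cost: Dict[Cell, int] = {start: 0}

    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            node: Optional[Cell] = cell
            while node is not None:
                path.append(node)
                node = came_from[node]
            return path[::-1]
        if g > cost[cell]:
            continue  # stale entry: a cheaper route to this cell was found later
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                ng = g + 1
                if ng < cost.get(nxt, float("inf")):
                    cost[nxt] = ng
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (ng + h(nxt), ng, nxt))
    return None  # goal unreachable


if __name__ == "__main__":
    # Hypothetical map: a wall across the middle row with one gap on the right.
    grid = [
        [0, 0, 0, 0],
        [1, 1, 1, 0],
        [0, 0, 0, 0],
    ]
    path = astar(grid, (0, 0), (2, 0))
    print(len(path) - 1)  # 8 moves: across the top, through the gap, back along the bottom
```

The robot cannot go straight down because of the wall, so the only shortest route detours through the gap; a real costmap also inflates obstacles so the planned path keeps the robot's footprint clear of walls.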

Networking the Deep Learning Robot

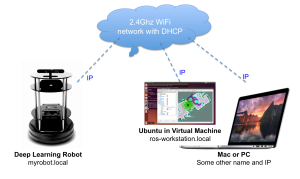

Once you’ve worked through the missing instructions for your Deep Learning Robot and got the various “Hello Worlds” running, you’ll soon want to try some of the ROS visualization tools. To do this, you’ll need ROS installed on a laptop or workstation that is networked with the robot. The Turtlebot tutorials do describe this, but I ran into various issues. Hopefully, this guide will help others who tread the same path.
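The heart of a ROS 1 network setup is two environment variables on each machine: `ROS_MASTER_URI`, which points at the master (roscore) running on the robot, and `ROS_HOSTNAME`, the name a machine advertises to the other nodes. Each name must be resolvable from the other machine, otherwise you can end up with topics that are listed but never deliver data. The Turtlebot tutorials typically set these with `export` lines in `~/.bashrc`. The Python sketch below prints the laptop-side lines and checks that the master's default port, 11311, is reachable. The helper names and hostnames are hypothetical, made up for this example.

```python
import socket

ROS_MASTER_PORT = 11311  # default port of the ROS 1 master (roscore)


def laptop_ros_env(robot_host: str, laptop_host: str) -> dict:
    """Environment variables for a laptop that uses the master on the robot.

    Both names are hypothetical and must resolve from the *other* machine.
    """
    return {
        "ROS_MASTER_URI": f"http://{robot_host}:{ROS_MASTER_PORT}",
        "ROS_HOSTNAME": laptop_host,
    }


def master_reachable(host: str, port: int = ROS_MASTER_PORT, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to the master's port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections, timeouts and DNS failures
        return False


if __name__ == "__main__":
    # Hypothetical hostnames: replace with your robot's and laptop's names.
    env = laptop_ros_env("tegra-ubuntu.local", "my-laptop.local")
    for key, value in env.items():
        print(f"export {key}={value}")
    # export ROS_MASTER_URI=http://tegra-ubuntu.local:11311
    # export ROS_HOSTNAME=my-laptop.local
```

On the robot itself, `ROS_MASTER_URI` would point at `http://localhost:11311` and `ROS_HOSTNAME` at the robot's own name. Calling `master_reachable("tegra-ubuntu.local")` from the laptop while roscore is running is a quick way to separate name-resolution or firewall problems from ROS configuration problems.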

Overview

This is the setup we’re shooting for:

Robo-makers: Deep Learning Robot Demo – ROS and Robotic Software

Set up your Autonomous Deep Learning Robot to work with the Mac

This short post describes how I’ve set up the Autonomous Deep Learning Robot to work with the Mac. The main things you gain are:

- Use of Bonjour / Zeroconf to refer to your robot by name not IP address.

- Transfer of files to and from the Jetson TK1 using Finder on Mac OS X.
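With Bonjour on the Mac and an mDNS responder such as Avahi running on the robot (an assumption about your setup), the robot's name with a `.local` suffix resolves through the ordinary system resolver, so any program can use it in place of an IP address. The sketch below looks a name up with Python's standard `socket.getaddrinfo`; the function name `resolve` is made up for this example.

```python
import socket
from typing import List


def resolve(hostname: str) -> List[str]:
    """Return the IPv4 addresses the system resolver finds for hostname.

    On macOS, names ending in .local are answered by Bonjour (mDNS);
    on Linux the same lookup needs Avahi with nss-mdns installed.
    Returns an empty list if the name cannot be resolved.
    """
    try:
        infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET,
                                   type=socket.SOCK_STREAM)
    except socket.gaierror:
        return []
    # Each entry is (family, type, proto, canonname, (address, port)).
    return sorted({info[4][0] for info in infos})
```

For example, `resolve("tegra-ubuntu.local")` should return the robot's current IPv4 address. `tegra-ubuntu` is a common default hostname on Jetson TK1 images, but treat it as a hypothetical and substitute your robot's actual name; an empty list usually means the robot is off, on another network, or not advertising itself.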

Autonomous Deep Learning Robot – the missing instructions

The Autonomous Deep Learning Robot from Autonomous Inc is a bargain-priced, Turtlebot 2-compatible robot with CUDA-based deep learning acceleration thrown in. It’s a great deal, but the instructions are sparse to non-existent, so the idea of this post is both to review the device and to fill in the gaps for anyone who has just unpacked one. I’ll be expanding the article as I learn more.

First, a little about the Turtlebot 2 compatibility. Turtlebot is a reference platform intended to provide a low-cost entry point for those wanting to develop with ROS (Robot Operating System). ROS is essential for doing anything sophisticated with the robot (e.g. exploring or making a map). Various manufacturers make and sell Turtlebot-compatible robots, all based around the same open source specifications. In fact, the only truly open source part of the hardware is a collection of wooden plates and a few metal struts; the rest comprises a Kobuki mobile base and a Kinect or ASUS Xtion Pro 3D camera. Read a good interview with the Turtlebot designers here. On top of this, Autonomous throw in a Bluetooth speaker and an Nvidia Jetson TK1 motherboard instead of the usual netbook.